Quick Start

Getting started with LangStream on Mac

Getting started with LangStream on a Mac is straightforward. You need Java, Homebrew, and Docker installed before following the steps below.
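Before installing, it can help to confirm that the three prerequisites are available. The following is a minimal sketch, not part of LangStream: `missing_tools` is a hypothetical helper name, and the check only tests that each command is on your PATH, not that it works (Docker, for example, must also be running).

```shell
# Hypothetical helper: print the name of each given tool that is not on PATH.
missing_tools() {
  for tool in "$@"; do
    command -v "$tool" >/dev/null 2>&1 || echo "$tool"
  done
}

# Check the three prerequisites named above.
missing=$(missing_tools java brew docker)
if [ -n "$missing" ]; then
  echo "Missing prerequisites:" $missing
else
  echo "All prerequisites found."
fi
```

If anything is reported missing, install it before continuing with step 1.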

1. Install LangStream using Homebrew

brew install LangStream/langstream/langstream

2. Set your OpenAI key in an environment variable

export OPEN_AI_ACCESS_KEY=your-key-here
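Because the example reads the key from the environment, a quick check in the same terminal can catch a missing or unexported variable before the application starts. This is a small sketch under that assumption; `check_openai_key` is a hypothetical helper name, and it only verifies that the variable is non-empty, not that the key is valid.

```shell
# Hypothetical helper: succeeds only when OPEN_AI_ACCESS_KEY is exported
# and non-empty. It does not contact OpenAI or validate the key.
check_openai_key() {
  if [ -z "$(printenv OPEN_AI_ACCESS_KEY)" ]; then
    echo "OPEN_AI_ACCESS_KEY is not set or not exported" >&2
    return 1
  fi
  echo "OPEN_AI_ACCESS_KEY is set"
}
```

Run `check_openai_key` in the terminal where you will start LangStream; a non-zero exit status means the export above has not taken effect in that shell.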

3. Start the LangStream application using Docker

langstream docker run test \
  -app https://github.com/LangStream/langstream/blob/main/examples/applications/openai-completions \
  -s https://github.com/LangStream/langstream/blob/main/examples/secrets/secrets.yaml

This command downloads the openai-completions example from the LangStream repository and runs it with the default secrets file, which reads the secrets from environment variables. The application provides a complete backend for a chatbot that uses OpenAI’s GPT-3.5-turbo to generate responses. It includes a WebSocket gateway that gives application environments, including web browsers, access to the streaming AI agents.

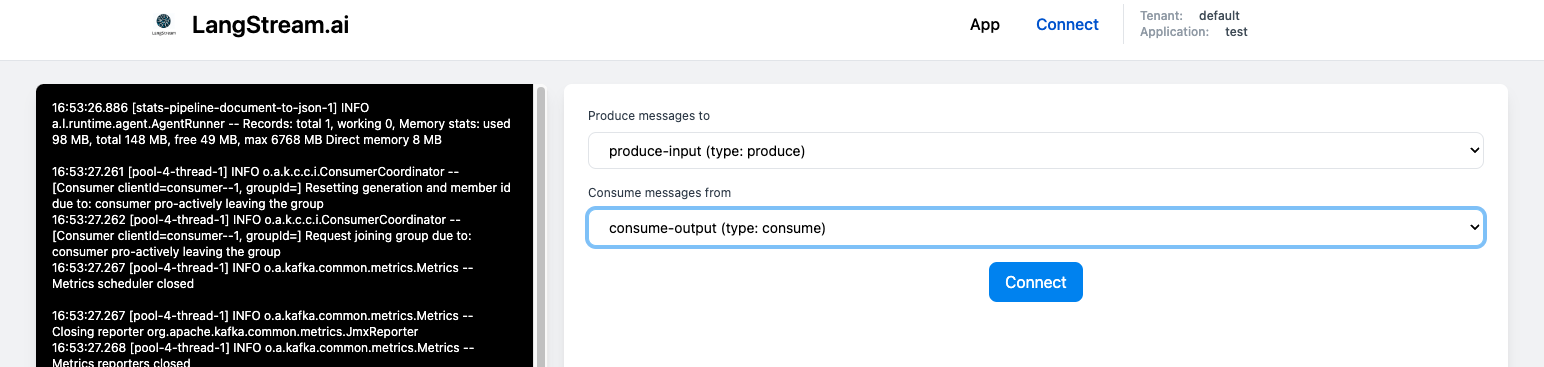

4. Connect to the WebSocket gateway using the UI

After LangStream initializes, it automatically opens the test UI in your browser. Click the Connect button to connect to the gateway.

Alternatively, you can use the text-based client to connect to the gateway. Run the following command in a different terminal to start the client:

langstream gateway chat test -cg consume-output -pg produce-input -p sessionId=$(uuidgen)

The gateway supports multiple concurrent users; give each one a unique sessionId, as the command above does with uuidgen.

5. Chat with the bot

Type a question in the Message box and press Enter. The response is streamed in real time as the LLM generates it.