Build Q&A chat over unstructured text in minutes

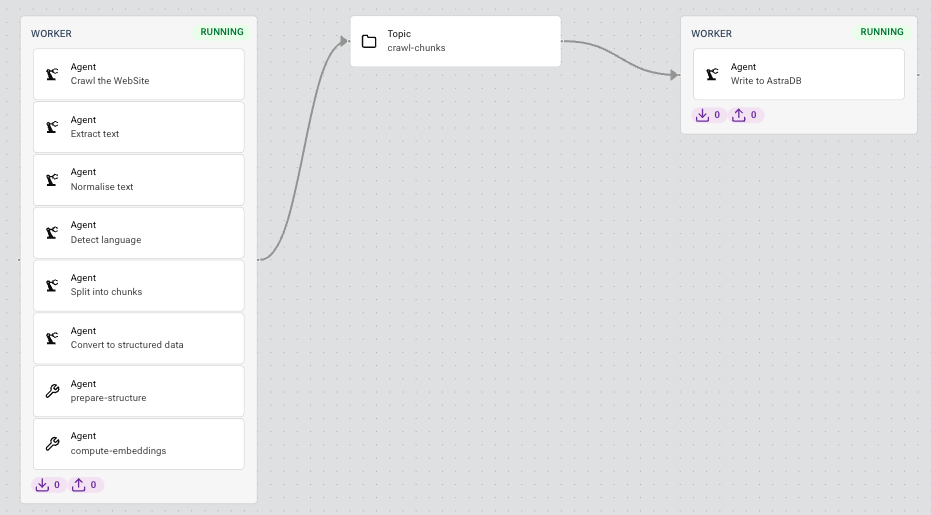

Using no-code agents, you can create text-to-vector-database pipelines and prompt templating agents for a variety of LLMs.

Use the latest Gen AI technologies

LangStream integrates with LLMs from OpenAI, Google Vertex AI, and Hugging Face, vector databases like Pinecone and DataStax, and Python libraries like LangChain and LlamaIndex.

Manage embeddings in your vector database

Create pipelines that automatically create and update embeddings in your vector database for unstructured data (PDFs, Word documents, HTML) using proprietary and open-source embedding models.
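
To make the create-and-update idea concrete, here is a minimal Python sketch of the pattern. It is not LangStream's API: `chunk_text`, `toy_embed`, and `upsert_document` are hypothetical helpers, the "vector database" is a plain dict, and the hashing function stands in for a real embedding model.

```python
import hashlib
import math


def chunk_text(text: str, max_words: int = 50) -> list[str]:
    """Split text into chunks of at most max_words words."""
    words = text.split()
    return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]


def toy_embed(text: str, dims: int = 8) -> list[float]:
    """Stand-in for a real embedding model: hash words into a unit-length vector."""
    vec = [0.0] * dims
    for word in text.lower().split():
        digest = hashlib.sha256(word.encode("utf-8")).digest()
        vec[digest[0] % dims] += 1.0
    norm = math.sqrt(sum(v * v for v in vec)) or 1.0
    return [v / norm for v in vec]


def upsert_document(store: dict, doc_id: str, text: str, max_words: int = 50) -> int:
    """Replace every chunk stored for doc_id with freshly embedded chunks."""
    # Drop stale chunks first so a shorter new version leaves nothing behind.
    for key in [k for k in store if k[0] == doc_id]:
        del store[key]
    chunks = chunk_text(text, max_words)
    for i, chunk in enumerate(chunks):
        store[(doc_id, i)] = {"text": chunk, "vector": toy_embed(chunk)}
    return len(chunks)


# Assumption: an in-memory dict plays the role of the vector database.
store = {}
upsert_document(store, "guide.pdf", "one two three four five six", max_words=4)
# Re-ingesting the same document updates its embeddings in place.
upsert_document(store, "guide.pdf", "one two three", max_words=4)
```

Keying each chunk by document ID and position is what lets a re-run act as an update rather than a duplicate insert.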

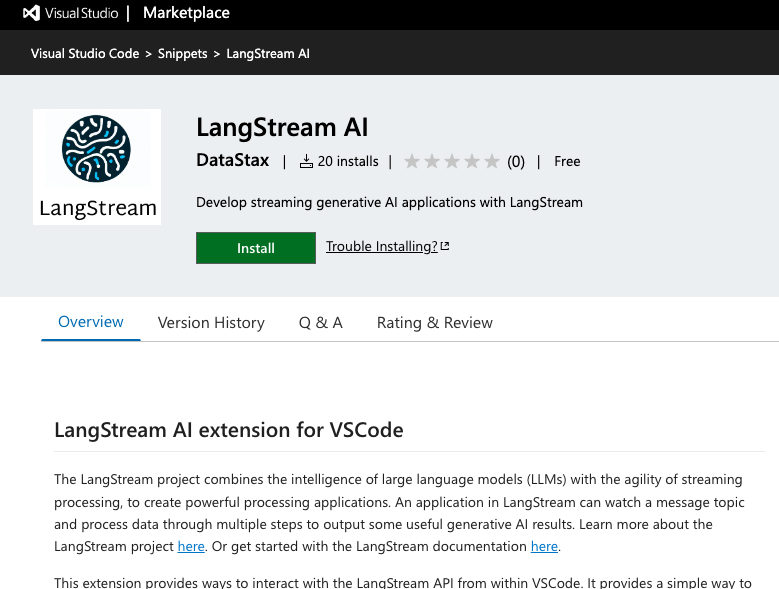

Code and deploy in Visual Studio Code

To make it easier to build LangStream apps, we have created a VS Code extension that includes app deployment, app updating, log analysis, and code snippets.

Declare an app and deploy it to dev or prod

In LangStream, an application consists of pipelines defined using configuration files or Python. Deploy to a local development environment or to a production environment, powered by proven tech: Kubernetes and Kafka.

Bring your existing data to the LLM

LangStream can tap into the data you already have when engineering a prompt to send to the LLM. Query SQL and NoSQL databases, or use Kafka Connect connectors to reach your existing data sources.
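
As an illustration of enriching a prompt with existing data, the sketch below queries a SQL table and fills a prompt template with the results. It uses only Python's standard `sqlite3` module rather than LangStream itself, and the `orders` table, `PROMPT_TEMPLATE`, and `build_prompt` are assumptions made for the example.

```python
import sqlite3

# Hypothetical template; the placeholders are filled from query results.
PROMPT_TEMPLATE = (
    "Answer the question using only the context below.\n"
    "Context:\n{context}\n"
    "Question: {question}"
)


def build_prompt(conn: sqlite3.Connection, customer_id: int, question: str) -> str:
    """Look up a customer's orders and render them into the prompt."""
    rows = conn.execute(
        "SELECT product, status FROM orders WHERE customer_id = ? ORDER BY id",
        (customer_id,),
    ).fetchall()
    context = "\n".join(f"- {product}: {status}" for product, status in rows)
    return PROMPT_TEMPLATE.format(
        context=context or "(no matching records)",
        question=question,
    )


# Assumption: an in-memory database stands in for your existing data store.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders ("
    "id INTEGER PRIMARY KEY, customer_id INTEGER, product TEXT, status TEXT)"
)
conn.executemany(
    "INSERT INTO orders (customer_id, product, status) VALUES (?, ?, ?)",
    [(1, "keyboard", "shipped"), (1, "monitor", "processing"), (2, "mouse", "delivered")],
)

prompt = build_prompt(conn, 1, "Where is my monitor?")
print(prompt)
```

The parameterized query keeps user-supplied values out of the SQL string, and only the rows relevant to the question end up in the prompt.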

Fix your day 2 problems with an event-driven architecture

LangStream is a streaming framework built on an event-driven architecture, which makes it asynchronous, decoupled, fault tolerant, and scalable.
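
For a feel of what asynchronous and decoupled mean in practice, here is a small Python sketch of the general pattern. It is an assumption-laden toy, not LangStream code: an `asyncio.Queue` stands in for a Kafka topic, and `producer`, `consumer`, and `run_pipeline` are hypothetical names.

```python
import asyncio


async def producer(queue: asyncio.Queue, events: list[str]) -> None:
    """Publish events without knowing who consumes them."""
    for event in events:
        await queue.put(event)
    await queue.put(None)  # sentinel: no more events


async def consumer(queue: asyncio.Queue, handled: list[str]) -> None:
    """Process events as they arrive, independently of the producer."""
    while True:
        event = await queue.get()
        if event is None:
            break
        handled.append(event.upper())


async def run_pipeline(events: list[str]) -> list[str]:
    # A bounded queue applies backpressure: the producer waits when it is full.
    queue: asyncio.Queue = asyncio.Queue(maxsize=2)
    handled: list[str] = []
    await asyncio.gather(producer(queue, events), consumer(queue, handled))
    return handled


result = asyncio.run(run_pipeline(["question received", "answer generated"]))
print(result)
```

The two coroutines share only the queue, so either side can be replaced or scaled without changing the other. A durable broker such as Kafka adds the persistence that an in-memory queue lacks.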